Apple is developing three new categories of wearable devices, according to a recent report by Bloomberg journalist Mark Gurman.

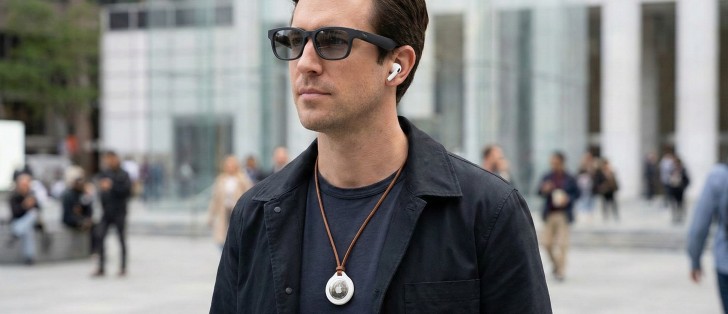

These devices include smart glasses, AirPods with cameras and expanded AI capabilities, and a smart pendant that can be clipped to clothing or worn around the neck.

All of these products are built around the Siri voice assistant, which will rely on visual context to carry out commands. The devices are designed to work in conjunction with the iPhone, and each will include cameras.

However, the cameras in the AirPods and the pendant will be low resolution, since their role is limited to supporting artificial intelligence features rather than taking actual photos or videos.

The smart glasses, by contrast, target a higher-end segment, with more advanced specifications and better cameras. Apple’s CEO, Tim Cook, indicated during an internal meeting that the company is working on new product categories powered by artificial intelligence, stressing that the world is changing rapidly and that this is prompting Apple to invest in new technologies.

The smart pendant will work as an accessory for the iPhone rather than as a standalone device, unlike earlier, unsuccessful attempts in the market. It will act as the phone’s “eyes and ears”, with an always-on camera and microphone for interacting with Siri.

It will not have the processing power of the Apple Watch; its performance will be closer to that of the AirPods. It is expected to launch next year, unless the project is cancelled.

Apple plans to launch the smart glasses next year as well, but they will not have a screen. Instead, they will feature dual speakers, microphones, and cameras: one camera is dedicated to capturing high-resolution images, and the other to computer vision processing, using techniques similar to those in the Vision Pro. These technologies will enable the glasses to understand the surrounding environment and accurately measure distances between objects.

With these glasses, Apple aims to provide an artificial intelligence companion that works throughout the day: the user will be able to look at a specific item and ask Siri about it, or get immediate assistance with daily tasks. The glasses will also allow event details to be added from posters directly to the calendar, and will support contextual reminders, such as alerting the user to pick up a specific item when standing in front of a specific shelf in a store.

For navigation, Siri will rely on real-world landmarks, for example telling the user to walk past a described vehicle or building before turning. However, the effectiveness of these features depends on Apple’s success in delivering an attractive design and a smooth user experience, especially since many of these functions can already be performed on a smartphone.

Overall, Apple appears to be searching for the next major technology category, especially after the Vision Pro failed to achieve the expected adoption. The question remains how far these new devices can convince users that they offer real value beyond what their current devices provide.